The Lord of the Words: The Two Frameworks

At PyCon 2023 in Spain we explored the fascinating world of Artificial Intelligence through the lens of Transformers. These models have revolutionised the way we understand and work with Natural Language Processing (NLP), and other fields besides. In this keynote we dive into their architecture, recent advances in the field, an exploration of the translation task, and a comparison of two of the leading Open-Source AI frameworks, TensorFlow and PyTorch, within the context of the HuggingFace Transformers library.

Transformers: Beyond Words

The Transformer brings with it a key underlying innovation: the self-attention mechanism. This allows the model to weigh every word in a sentence against all the others, so it can better understand context and focus on the words that matter most.

Fig1. In this sentence, the word "right" means different things depending on its context. The self-attention mechanism makes it possible to distinguish the "right" of correctness from the "right" of direction, pointing to exactly where Moria is.
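To make the idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: a real Transformer learns separate query, key and value projections, whereas this sketch uses the embeddings directly, and the three 2-dimensional embeddings (and the function names) are invented purely for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(embeddings):
    """Scaled dot-product self-attention with Q = K = V = embeddings."""
    dim = len(embeddings[0])
    outputs, weights = [], []
    for query in embeddings:
        # Similarity of this token with every token in the sentence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in embeddings
        ]
        attention = softmax(scores)
        weights.append(attention)
        # Each output is a weighted mix of all token embeddings.
        outputs.append([
            sum(w * value[i] for w, value in zip(attention, embeddings))
            for i in range(dim)
        ])
    return outputs, weights


# Hypothetical embeddings: "turn" and "right" point in a similar direction.
tokens = ["turn", "right", "now"]
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(embeddings)

for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

In this toy setup the row for "right" gives more weight to "turn" than to "now", which is the kind of contextual focus the mechanism provides.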

Beyond the numerous demonstrations and small projects, we are witnessing the release of pre-trained models through Open-Source and the mass adoption of AI in software products, where both development and AI techniques are being standardised through new tools and processes.

As a guide in this exploration, we use HuggingFace's valuable Transformers library, which has become the "Rivendell of Machine Learning": like Frodo and Sam venturing to Mount Doom, we too venture into the engineering of pre-trained language models. But instead of a ring, we carry the ability to take pre-trained models and apply them to various tasks.

At PyCon we ran experiments with the library in two of the frameworks it supports: TensorFlow and PyTorch. While there are similarities at the conceptual level, the real beauty lies in the syntactical differences between the frameworks, which reflect how each implements the same concepts in its own way.

Like the characters of "The Lord of the Rings", who encounter different races and cultures on their journey, we will also discover the peculiarities of these two AI worlds.

Differences at Network level

In TensorFlow 1.x, we first define a graph of tensors and operations and then start a session with tf.Session() to run it, which lets the framework optimise the computations. In PyTorch, operations are executed immediately as they are defined, which facilitates iterative development. It is worth noting, however, that TensorFlow has evolved in imitation of PyTorch: since TensorFlow 2.0, "eager" execution is the default, and the session API lives on as tf.compat.v1.Session().

Fig2. In TensorFlow, we have to start a session with tf.Session() to run a graph. The framework has since moved to eager mode. In PyTorch, from its conception, we don't need a session.

Differences at Operations Calculation level

Automatic differentiation in TensorFlow is achieved through a tape context with tf.GradientTape(), while in PyTorch it is inherent in the framework thanks to Autograd. This means that in PyTorch, automatic differentiation occurs without the need for an additional context: it is enough to mark a tensor with requires_grad=True. Like the flow of power between Saruman and Gandalf, the process of differentiation manifests itself differently in these two frameworks.

Fig3. In TensorFlow, we record the operations inside a tf.GradientTape() context to compute gradients. In PyTorch, Autograd tracks them without an additional context.

Device Management

TensorFlow uses tf.device() as a context manager to specify the device on which a block of code will be executed, while PyTorch uses .to(device) to move objects such as tensors and models to a specific device. Both approaches offer control over the device but vary in the way they are implemented. Like the choice of weapons in an epic battle, the choice of devices in these frameworks can determine the outcome of the performance battle.

Fig4. PyTorch treats the device as an object.

Memory Management

PyTorch offers more flexible GPU memory management: memory is allocated incrementally as it is needed, and its use can be inspected with functions such as torch.cuda.memory_allocated() and torch.cuda.memory_reserved(). TensorFlow, on the other hand, reserves nearly all of the GPU memory by default. While PyTorch's flexibility provides more granular control over resources, TensorFlow seems to be exploring these features in the tf.config.experimental module.

Fig5. In TensorFlow, we use the tf.config.experimental module to learn more about memory.

Conclusions of the trip

Although the library supports both frameworks, PyTorch appears to be its native one. Many of the library's classes are defined with PyTorch, PyTorch points directly to HuggingFace, and there is an overwhelming number of models in PyTorch (100K) versus approximately 10K in TensorFlow, even though many of the pre-trained models are available in both frameworks. All of this attests to how the library seems to have been built in harmony with that framework.

During our exploration, we discovered that there is a significant leap from tutorial to example script. The tutorial takes a very useful comparative approach to the two frameworks and is extremely didactic, while the examples folder in the library's GitHub repository is a solid resource for going further. In the example scripts, the structure and semantics are quite similar across the two frameworks.

It is also essential to review the README.md documentation for each task in the HuggingFace Transformers library to understand the models and datasets used in the examples, and to verify on the Hub which frameworks a model supports. We have also noticed that the integration with experimentation platforms in PyTorch is thoroughly documented and provides flags that make it easy to switch models and datasets.

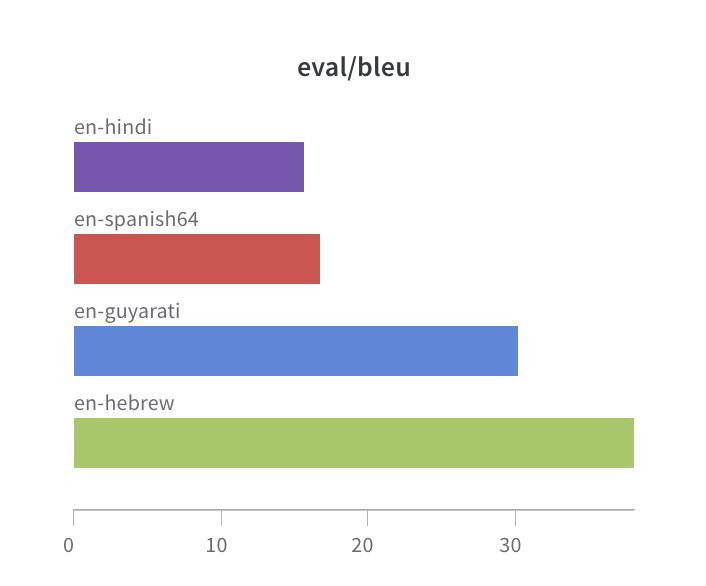

Fig6. Results. 192 total downloads from the Hub of the pre-trained models, resulting from the scan. According to the BLEU metric, the best model seems to be the one that translates from English into Hebrew.
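For readers unfamiliar with BLEU, the sketch below shows the idea behind the metric: n-gram precisions of the translation against a reference, combined by a geometric mean and scaled by a brevity penalty. It is a simplified sentence-level version with a single reference and no smoothing, not the scoring code used for the results above, and the example sentences are invented.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count every run of n consecutive tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, reference, max_n=4):
    """Sentence-level BLEU against one reference, without smoothing."""
    log_precisions = []
    for n in range(1, max_n + 1):
        cand, ref = ngrams(candidate, n), ngrams(reference, n)
        # Clip each n-gram count by how often it appears in the reference.
        overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
        total = sum(cand.values())
        if overlap == 0 or total == 0:
            return 0.0
        log_precisions.append(math.log(overlap / total))
    # Penalise candidates that are shorter than the reference.
    if len(candidate) > len(reference):
        brevity_penalty = 1.0
    else:
        brevity_penalty = math.exp(1 - len(reference) / len(candidate))
    return brevity_penalty * math.exp(sum(log_precisions) / max_n)


reference = "the ring must be destroyed".split()
perfect = bleu(reference, reference)
partial = bleu("the ring must be hidden".split(), reference)
print(round(perfect, 3), round(partial, 3))
```

A perfect match scores 1, and the score drops as fewer n-grams of the translation appear in the reference.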

The Magic of Open-Source

Preparing the talk and exploring the library in the two frameworks also led me to contribute an example to the Python docstring of one of the library's classes, as part of a good first issue. Through it I learned about the evolution of a class in the context of Open-Source and met amazing contributors - my eternal gratitude to you, nablabits. It also helped me to prepare further contributions to the library and to analyse possible use cases.

Fig7. How we close an issue is just as important as how we open it

Contributing to Open-Source is not only a generous act, but also a valuable investment in personal and professional growth. In the world of Open-Source software, there is a constant dance between give and take. Many of us use Open-Source and build our solutions and mental models on the work of others, which has made me reflect on many levels on the duty to give back to the community. By collaborating on Open-Source projects, we share our knowledge and skills with the community, contributing to the advancement of technology and creating more robust and reliable solutions. In return, we get the opportunity to learn from others, improve our own skills and become part of a global community of creative minds. This balance of giving and receiving is reminiscent of the Fellowship of the Ring, where each member brings his or her unique skill to achieve a common goal, and in the process, all are strengthened and move towards victory over the forces of darkness. Likewise, by giving, we can help forge a brighter and more resilient technological future.